Natural Language Processing in Biomedical Research

Natural Language Processing (NLP) in Biomedical Research involves the application of computational techniques to analyze, understand, and generate human language in the context of biomedical data and information. This field plays a crucial role in extracting valuable insights from vast amounts of text data, such as research papers, clinical notes, and patient records, to support various biomedical applications ranging from drug discovery to clinical decision-making. In this course, we will explore key terms and vocabulary essential for understanding NLP in the context of Biomedical Research.

1. **Tokenization**: Tokenization is the process of breaking down text into smaller units called tokens, which could be words, phrases, or symbols. In NLP, tokenization is a fundamental preprocessing step that prepares text data for analysis. For example, consider the sentence: "The patient presented with fever and cough." Tokenizing this sentence at the word level yields the tokens "The," "patient," "presented," "with," "fever," "and," "cough," plus the final period.
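A minimal word-level tokenizer can be sketched with a regular expression, assuming that a token is either a run of letters, digits, or underscores, or a single punctuation character. The function name `tokenize` is illustrative only; production biomedical tokenizers handle many more cases.

```python
import re

# Assumed rule: a token is a run of word characters (\w+) or one
# non-space punctuation character ([^\w\s]).
TOKEN_PATTERN = re.compile(r"\w+|[^\w\s]")

def tokenize(text: str) -> list[str]:
    """Split text into word and punctuation tokens."""
    return TOKEN_PATTERN.findall(text)

print(tokenize("The patient presented with fever and cough."))
# ['The', 'patient', 'presented', 'with', 'fever', 'and', 'cough', '.']
```

This simple rule splits a hyphenated name such as "IL-6" into "IL", "-", and "6", which is one reason biomedical pipelines often use domain-specific tokenizers.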

2. **Lemmatization**: Lemmatization is the process of reducing an inflected word to its base or dictionary form (lemma), usually with the help of a lexicon and often the word's part of speech. For instance, the lemma of the words "running," "runs," and "ran" is "run"; recovering "run" from the irregular form "ran" requires dictionary knowledge rather than simple suffix rules. Lemmatization is important in NLP because it groups variants of the same word together, which improves text normalization.
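Real lemmatizers combine a full lexicon with morphological analysis. The sketch below reduces that to a lookup in a tiny, hypothetical lemma table (`LEMMA_TABLE` and `lemmatize` are illustrative names), which is enough to show how an irregular form like "ran" can still map to "run".

```python
# Hypothetical miniature lexicon: inflected form -> lemma.
# A real lemmatizer would use a complete dictionary plus morphological rules.
LEMMA_TABLE = {
    "running": "run",
    "runs": "run",
    "ran": "run",
    "studies": "study",
    "mice": "mouse",
}

def lemmatize(word: str) -> str:
    """Return the dictionary form of a word, or the lowercased word if unknown."""
    w = word.lower()
    return LEMMA_TABLE.get(w, w)

print([lemmatize(w) for w in ["Running", "runs", "ran", "mice"]])
# ['run', 'run', 'run', 'mouse']
```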

3. **Stemming**: Stemming is another text normalization technique that removes suffixes from words using fixed rules, reducing them to a root form (stem). Unlike lemmatization, stemming does not consult a dictionary, so the resulting stem may not be a real word. For example, the Porter stemmer reduces "running" and "runs" to "run" and "studies" to "studi," but leaves the irregular form "ran" unchanged. Stemming is computationally less expensive than lemmatization but may not always produce meaningful stems.
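The sketch below is a deliberately small rule-based stemmer with a few hypothetical suffix rules; it is not the Porter algorithm, and `simple_stem` is an illustrative name. It shows the two properties described above: stems can be non-words, and irregular forms pass through untouched.

```python
def simple_stem(word: str) -> str:
    """Strip a few common English suffixes using fixed rules (no dictionary)."""
    w = word.lower()
    if w.endswith("ies") and len(w) > 4:
        return w[:-3] + "i"              # "studies" -> "studi" (not a real word)
    if w.endswith("ing") and len(w) > 5:
        w = w[:-3]                       # "running" -> "runn"
        if w[-1] == w[-2] and w[-1] not in "lsz":
            w = w[:-1]                   # undouble final consonant: "runn" -> "run"
        return w
    if w.endswith("s") and not w.endswith("ss") and len(w) > 3:
        return w[:-1]                    # "runs" -> "run"
    return w                             # no rule applies: "ran" stays "ran"

print([simple_stem(w) for w in ["running", "runs", "ran", "studies"]])
# ['run', 'run', 'ran', 'studi']
```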

4. **Named Entity Recognition (NER)**: Named Entity Recognition is a process in NLP that involves identifying and classifying named entities in text into predefined categories such as person names, locations, organizations, and more. In biomedical research, NER is crucial for extracting valuable information from text, such as identifying genes, proteins, diseases, and drug names.
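Modern biomedical NER systems are usually trained sequence models, but the idea of tagging spans with categories can be sketched with the older dictionary (gazetteer) approach. The lexicon below is hypothetical, and the function performs greedy longest-match lookup so that a multi-word term such as "breast cancer" wins over a shorter prefix.

```python
# Hypothetical lexicon mapping lowercased token sequences to entity types.
LEXICON = {
    ("breast", "cancer"): "DISEASE",
    ("brca1",): "GENE",
    ("tamoxifen",): "DRUG",
}
MAX_LEN = max(len(key) for key in LEXICON)

def dictionary_ner(tokens: list[str]) -> list[tuple[str, str, int, int]]:
    """Return (text, label, start, end) spans using greedy longest match."""
    lowered = [t.lower() for t in tokens]
    entities = []
    i = 0
    while i < len(tokens):
        # Try the longest candidate span first.
        for n in range(min(MAX_LEN, len(tokens) - i), 0, -1):
            label = LEXICON.get(tuple(lowered[i:i + n]))
            if label is not None:
                entities.append((" ".join(tokens[i:i + n]), label, i, i + n))
                i += n
                break
        else:
            i += 1  # no entity starts at this token
    return entities

sentence = "The patient with breast cancer was tested for BRCA1 and started on tamoxifen"
print(dictionary_ner(sentence.split()))
# [('breast cancer', 'DISEASE', 3, 5), ('BRCA1', 'GENE', 8, 9), ('tamoxifen', 'DRUG', 12, 13)]
```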

5. **Part-of-Speech (POS) Tagging**: Part-of-Speech tagging is the process of assigning grammatical categories (e.g., noun, verb, adjective) to the words in a sentence. POS tagging helps in understanding the syntactic structure of text and is a useful step in many NLP tasks, including information extraction, sentiment analysis, and machine translation.
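A common baseline for POS tagging assigns each word the tag it received most often in a labeled training corpus. The sketch below assumes a tiny hypothetical training set (tag names borrowed from the Universal Dependencies tag set) and tags unseen words as `NOUN`; real taggers also use the surrounding context, for example with HMMs, CRFs, or neural models.

```python
from collections import Counter, defaultdict

# Hypothetical hand-tagged training sentences.
TRAIN = [
    [("the", "DET"), ("patient", "NOUN"), ("presented", "VERB"), ("with", "ADP"), ("fever", "NOUN")],
    [("the", "DET"), ("fever", "NOUN"), ("resolved", "VERB")],
    [("a", "DET"), ("severe", "ADJ"), ("cough", "NOUN")],
]

def train_most_frequent_tag(sentences):
    """Map each word to the tag it received most often in training."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        for word, tag in sentence:
            counts[word.lower()][tag] += 1
    return {word: tags.most_common(1)[0][0] for word, tags in counts.items()}

def pos_tag(tokens, model, default="NOUN"):
    """Tag tokens by lookup; unseen words fall back to a default tag."""
    return [(t, model.get(t.lower(), default)) for t in tokens]

model = train_most_frequent_tag(TRAIN)
print(pos_tag(["The", "patient", "presented", "with", "cough"], model))
# [('The', 'DET'), ('patient', 'NOUN'), ('presented', 'VERB'), ('with', 'ADP'), ('cough', 'NOUN')]
```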

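6. **Stop Words**: Stop words are common words (e.g., "the," "is," "and") that are often filtered out during text preprocessing because they carry little meaning for tasks such as document classification or retrieval. Removing stop words reduces noise and improves the efficiency of NLP algorithms. In clinical text, however, the stop list must be chosen carefully, because words such as "no" and "not" signal negation: dropping them turns "no fever" into "fever."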
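The sketch below filters tokens against a small hypothetical stop list and includes an option to keep negation cues, because general-purpose stop lists often include "no" and "not". The names `STOP_WORDS`, `NEGATIONS`, and `remove_stop_words` are illustrative.

```python
# Hypothetical stop list; lists shipped with NLP libraries are much longer.
STOP_WORDS = {"the", "is", "and", "a", "an", "of", "with", "has", "was", "no", "not"}
NEGATIONS = {"no", "not"}

def remove_stop_words(tokens, keep_negations=True):
    """Drop stop words, optionally keeping negation cues."""
    stops = STOP_WORDS - NEGATIONS if keep_negations else STOP_WORDS
    return [t for t in tokens if t.lower() not in stops]

tokens = "The patient has no fever".split()
print(remove_stop_words(tokens, keep_negations=False))  # ['patient', 'fever']
print(remove_stop_words(tokens))                        # ['patient', 'no', 'fever']
```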

7. **Bag-of-Words (BoW)**: Bag-of-Words is a simple and widely used technique in NLP for representing text data as a collection of words and their frequencies in a document. BoW ignores the order and context of words in a text but captures the overall word occurrences. It forms the basis for many text classification and clustering algorithms.
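A minimal bag-of-words vectorizer, assuming lowercase whitespace tokenization, builds a shared vocabulary and counts each word per document. The function name `bag_of_words` is illustrative.

```python
from collections import Counter

def bag_of_words(docs):
    """Return a sorted vocabulary and one count vector per document."""
    tokenized = [doc.lower().split() for doc in docs]
    vocab = sorted({word for tokens in tokenized for word in tokens})
    vectors = []
    for tokens in tokenized:
        counts = Counter(tokens)
        vectors.append([counts[word] for word in vocab])
    return vocab, vectors

vocab, vectors = bag_of_words(["fever and cough", "cough cough and rash"])
print(vocab)    # ['and', 'cough', 'fever', 'rash']
print(vectors)  # [[1, 1, 1, 0], [1, 2, 0, 1]]
```

Because only counts are kept, "cough and fever" and "fever and cough" produce identical vectors, which is exactly the loss of word order described above.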

8. **Term Frequency-Inverse Document Frequency (TF-IDF)**: TF-IDF is a statistical measure that evaluates the importance of a word in a document relative to a collection of documents. It combines the term frequency (TF) of a word in a document with the inverse document frequency (IDF) of the word across all documents. TF-IDF is used to highlight key terms in a document and is commonly used in information retrieval and text mining tasks.
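One common form of the score is `tfidf(t, d) = tf(t, d) * log(N / df(t))`, where `tf(t, d)` is the count of term `t` in document `d` divided by the document length, `N` is the number of documents, and `df(t)` is the number of documents containing `t`. The sketch below implements that variant with whitespace tokenization; libraries such as scikit-learn use smoothed and normalized variants by default, so their numbers will differ.

```python
import math
from collections import Counter

def tf_idf(docs):
    """Compute tf-idf(t, d) = (count(t, d) / len(d)) * log(N / df(t)) for each doc."""
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)
    df = Counter()
    for tokens in tokenized:
        df.update(set(tokens))          # count each term once per document
    scores = []
    for tokens in tokenized:
        counts = Counter(tokens)
        scores.append({
            term: (count / len(tokens)) * math.log(n_docs / df[term])
            for term, count in counts.items()
        })
    return scores

docs = ["patient has fever", "patient has cough", "patient has fever and rash"]
scores = tf_idf(docs)
print(round(scores[1]["cough"], 3))    # 0.366 (rare term: log(3/1) / 3)
print(scores[0]["patient"])            # 0.0 (appears in every document)
```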

9. **Word Embeddings**: Word embeddings are dense vector representations of words in a continuous vector space. These embeddings capture semantic relationships between words based on the contexts in which they appear in a large corpus of text, so words used in similar contexts (such as "fever" and "pyrexia") tend to receive similar vectors. This lets algorithms generalize across related terms and has substantially improved performance in tasks such as text classification, sentiment analysis, and machine translation.
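Similarity between embeddings is typically measured with cosine similarity. The 3-dimensional vectors below are made up purely for illustration; real embeddings are learned from a corpus and have far more dimensions.

```python
import math

# Made-up vectors for illustration only; these are not trained embeddings.
EMBEDDINGS = {
    "fever":   [0.90, 0.10, 0.30],
    "pyrexia": [0.85, 0.15, 0.35],
    "insulin": [0.10, 0.90, 0.20],
}

def cosine_similarity(u, v):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

# The synonym pair scores much higher than the unrelated pair.
print(cosine_similarity(EMBEDDINGS["fever"], EMBEDDINGS["pyrexia"]))
print(cosine_similarity(EMBEDDINGS["fever"], EMBEDDINGS["insulin"]))
```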

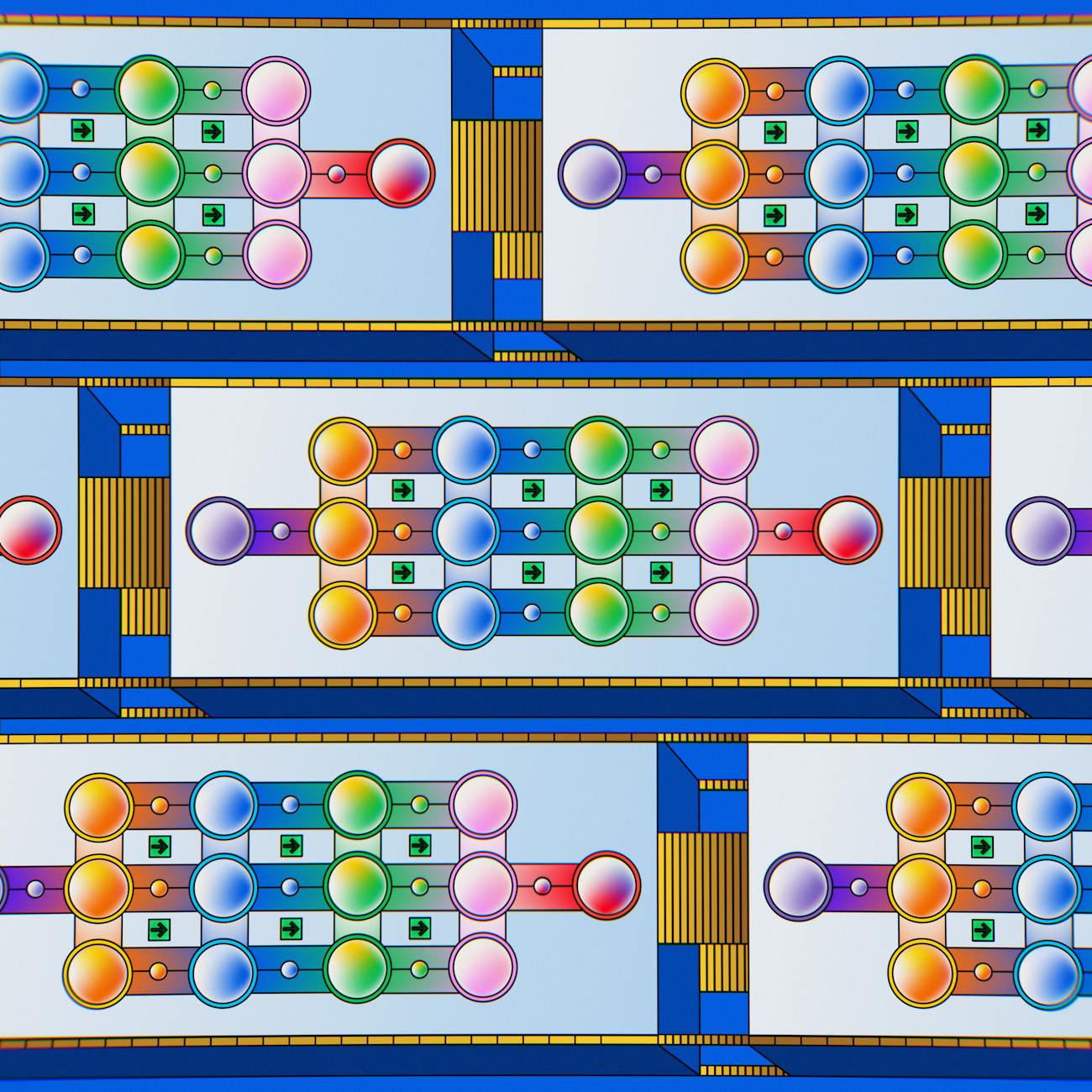

10. **Recurrent Neural Networks (RNNs)**: Recurrent Neural Networks are a type of neural network architecture designed to handle sequential data, such as text. An RNN processes a sequence one element at a time and maintains a hidden state that is updated at each step, which lets it carry information from earlier words forward and capture dependencies between words in a sentence. In NLP, RNNs are used for tasks like language modeling, text generation, and sequence labeling.
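The recurrence of a vanilla (Elman) RNN is `h_t = tanh(W_x x_t + W_h h_{t-1} + b)`. The pure-Python sketch below runs that recurrence with small, hypothetical hand-set weights (no training) to show that the final hidden state depends on earlier inputs, not just the last one.

```python
import math

def rnn_forward(inputs, W_x, W_h, b):
    """Run a vanilla RNN: h_t = tanh(W_x x_t + W_h h_{t-1} + b)."""
    hidden_size = len(b)
    h = [0.0] * hidden_size                      # initial hidden state h_0
    states = []
    for x in inputs:                             # one input vector per token
        # The right-hand side is evaluated with the previous h before reassignment.
        h = [
            math.tanh(
                b[i]
                + sum(W_x[i][j] * x[j] for j in range(len(x)))
                + sum(W_h[i][k] * h[k] for k in range(hidden_size))
            )
            for i in range(hidden_size)
        ]
        states.append(h)
    return states

W_x = [[0.5, -0.3], [0.2, 0.4]]   # hypothetical input-to-hidden weights
W_h = [[0.1, 0.2], [-0.3, 0.5]]   # hypothetical hidden-to-hidden weights
b = [0.0, 0.1]

seq_a = [[1.0, 0.0], [0.0, 1.0]]   # the two sequences differ only in the first input
seq_b = [[0.0, 1.0], [0.0, 1.0]]
print(rnn_forward(seq_a, W_x, W_h, b)[-1])
print(rnn_forward(seq_b, W_x, W_h, b)[-1])  # differs: h_t carries earlier context
```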

11. **Long Short-Term Memory (LSTM)**: LSTM is a variant of RNNs that mitigates the vanishing gradient problem and enables better learning of long-range dependencies in sequential data. LSTMs have memory cells, controlled by input, forget, and output gates, that can retain information over many time steps, making them effective for tasks that require understanding context over extended sequences, such as machine translation and speech recognition.
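A single LSTM step computes forget, input, and output gates with a sigmoid, a candidate value with tanh, and then updates the cell state additively: `c_t = f * c_{t-1} + i * g` and `h_t = o * tanh(c_t)`. The scalar sketch below uses hypothetical hand-set parameters (keys such as `"wf"` and `"bf"` are illustrative) so that a value written at the first step is largely retained over later steps with no input.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h_prev, c_prev, p):
    """One step of a scalar LSTM cell with parameter dict p."""
    f = sigmoid(p["wf"] * x + p["uf"] * h_prev + p["bf"])    # forget gate
    i = sigmoid(p["wi"] * x + p["ui"] * h_prev + p["bi"])    # input gate
    o = sigmoid(p["wo"] * x + p["uo"] * h_prev + p["bo"])    # output gate
    g = math.tanh(p["wg"] * x + p["ug"] * h_prev + p["bg"])  # candidate value
    c = f * c_prev + i * g          # additive cell-state update
    h = o * math.tanh(c)            # exposed hidden state
    return h, c

# Hypothetical parameters: the forget gate stays almost fully open,
# and the input gate opens only when x is 1.
params = {
    "wf": 0.0, "uf": 0.0, "bf": 5.0,
    "wi": 10.0, "ui": 0.0, "bi": -5.0,
    "wo": 0.0, "uo": 0.0, "bo": 0.0,
    "wg": 1.0, "ug": 0.0, "bg": 0.0,
}

h, c = 0.0, 0.0
cell_history = []
for x in [1.0, 0.0, 0.0, 0.0, 0.0]:   # write once, then four steps of no input
    h, c = lstm_step(x, h, c, params)
    cell_history.append(c)
print([round(v, 3) for v in cell_history])  # cell state decays only slowly
```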

12. **Bidirectional Encoder Representations from Transformers (BERT)**: BERT is a pre-trained language model introduced by Google in 2018 that significantly improved performance on a wide range of NLP tasks. BERT uses the Transformer encoder architecture and is pre-trained with a masked language modeling objective, in which hidden words are predicted from both their left and right context. It has been widely adopted in biomedical research for tasks like named entity recognition, question answering, and text classification.
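BERT's masked language modeling objective selects about 15% of input tokens for prediction; of those, 80% are replaced with `[MASK]`, 10% with a random token, and 10% are left unchanged. The sketch below prepares one such training example, assuming whitespace tokens (BERT itself works on WordPiece subword units); the function name `mask_for_mlm` is hypothetical.

```python
import random

def mask_for_mlm(tokens, vocab, mask_prob=0.15, seed=0):
    """Prepare one masked-language-modeling example, BERT-style.

    Returns (inputs, labels): labels[i] holds the original token at positions
    the model must predict and None elsewhere.
    """
    rng = random.Random(seed)
    inputs = list(tokens)
    labels = [None] * len(tokens)
    for i, token in enumerate(tokens):
        if rng.random() >= mask_prob:
            continue                       # position not selected for prediction
        labels[i] = token
        r = rng.random()
        if r < 0.8:
            inputs[i] = "[MASK]"           # 80%: replace with the mask token
        elif r < 0.9:
            inputs[i] = rng.choice(vocab)  # 10%: replace with a random token
        # remaining 10%: keep the original token unchanged
    return inputs, labels

tokens = "the patient presented with fever and cough".split()
inputs, labels = mask_for_mlm(tokens, vocab=tokens, mask_prob=0.3, seed=1)
print(inputs)
print(labels)
```

The model sees `inputs` and is trained to predict the non-`None` entries of `labels`, which forces it to use context on both sides of each selected position.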

13. **BioBERT**: BioBERT is a domain-specific variant of BERT that is initialized with the original BERT weights and then further pre-trained on large biomedical corpora (PubMed abstracts and PMC full-text articles). This additional pre-training improves its handling of domain-specific terminology and concepts; like BERT, it is then fine-tuned on labeled data for individual biomedical NLP tasks such as named entity recognition or question answering.

14. **Word Sense Disambiguation**: Word Sense Disambiguation is the task of determining the correct meaning of a word based on its context in a sentence. In biomedical NLP, where terms can have multiple meanings depending on the context, word sense disambiguation is essential for accurate information extraction and interpretation. For example, the abbreviation "MS" can refer to multiple sclerosis or mitral stenosis, and only the surrounding clinical context reveals which is meant.
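One classic approach is the simplified Lesk algorithm: choose the sense whose definition (gloss) shares the most words with the context. The sketch below applies it to the abbreviation "MS" using a hypothetical two-sense inventory with short made-up glosses; real systems draw senses from resources such as the UMLS and often use supervised models instead.

```python
# Hypothetical sense inventory: each sense of "MS" has a short gloss.
SENSES = {
    "MS": {
        "multiple sclerosis": "demyelinating disease of the central nervous system "
                              "with lesions in the brain and spinal cord",
        "mitral stenosis": "narrowing of the mitral valve of the heart "
                           "often heard as a diastolic murmur",
    }
}
STOP_WORDS = {"a", "an", "the", "of", "in", "with", "and", "as", "to", "due"}

def simplified_lesk(word, context_tokens):
    """Pick the sense whose gloss shares the most words with the context."""
    context = {t.lower() for t in context_tokens} - STOP_WORDS
    best_sense, best_overlap = None, -1
    for sense, gloss in SENSES[word].items():
        overlap = len(context & (set(gloss.lower().split()) - STOP_WORDS))
        if overlap > best_overlap:       # ties keep the first sense listed
            best_sense, best_overlap = sense, overlap
    return best_sense

print(simplified_lesk("MS", "MRI showed new lesions in the spinal cord consistent with MS".split()))
# multiple sclerosis
print(simplified_lesk("MS", "Echo revealed a narrowed mitral valve and a diastolic murmur due to MS".split()))
# mitral stenosis
```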

15. **Ontology**: An ontology is a formal representation of knowledge that defines the concepts, relationships, and properties within a domain. In biomedical research, ontologies play a crucial role in organizing and standardizing biomedical knowledge, such as diseases, drugs, genes, and biological processes, to facilitate data integration and interoperability.
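A core use of an ontology is reasoning over "is-a" links: a query for a general concept should also match its more specific descendants. The sketch below stores a hypothetical miniature disease hierarchy as parent lists and computes ancestors by traversal; real biomedical ontologies are far larger and define many relation types besides "is-a".

```python
# Hypothetical miniature is-a hierarchy: concept -> list of direct parents.
IS_A = {
    "type 1 diabetes mellitus": ["diabetes mellitus", "autoimmune disease"],
    "type 2 diabetes mellitus": ["diabetes mellitus"],
    "diabetes mellitus": ["endocrine disease"],
    "endocrine disease": ["disease"],
    "autoimmune disease": ["disease"],
}

def ancestors(concept):
    """Return all concepts reachable by following is-a links upward."""
    found = set()
    stack = list(IS_A.get(concept, []))
    while stack:
        parent = stack.pop()
        if parent not in found:
            found.add(parent)
            stack.extend(IS_A.get(parent, []))
    return found

def subsumes(general, specific):
    """True if `specific` is the same as, or a descendant of, `general`."""
    return general == specific or general in ancestors(specific)

print(sorted(ancestors("type 1 diabetes mellitus")))
# ['autoimmune disease', 'diabetes mellitus', 'disease', 'endocrine disease']
print(subsumes("endocrine disease", "type 2 diabetes mellitus"))  # True
```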

16. **Natural Language Understanding (NLU)**: Natural Language Understanding is a subfield of NLP that focuses on enabling machines to comprehend and interpret human language. NLU involves tasks such as semantic analysis, entity recognition, and sentiment analysis to extract meaning from text data. In biomedical research, NLU is essential for processing and analyzing vast amounts of unstructured text data.

17. **Text Mining**: Text Mining is the process of extracting valuable information from unstructured text data using computational techniques. In biomedical research, text mining plays a vital role in analyzing scientific literature, clinical notes, and electronic health records to discover new insights, relationships, and patterns that can aid in medical research and healthcare decision-making.
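A simple text-mining technique for surfacing candidate relationships in literature is sentence-level co-occurrence counting. The sketch below assumes hypothetical gene and disease lists and a few illustrative sentences; frequent co-occurrence only suggests a relationship worth examining, it does not establish one.

```python
import re
from collections import Counter
from itertools import product

# Hypothetical entity lists; a real pipeline would use an NER system.
GENES = {"brca1", "tp53"}
DISEASES = {"breast cancer", "ovarian cancer"}

def count_cooccurrences(sentences):
    """Count gene-disease pairs mentioned in the same sentence."""
    pairs = Counter()
    for sentence in sentences:
        tokens = re.findall(r"[a-z0-9]+", sentence.lower())
        padded = " " + " ".join(tokens) + " "   # pad so phrase matches respect word boundaries
        genes = sorted(g for g in GENES if g in tokens)
        diseases = sorted(d for d in DISEASES if f" {d} " in padded)
        for gene, disease in product(genes, diseases):
            pairs[(gene, disease)] += 1
    return pairs

sentences = [
    "BRCA1 mutations increase the risk of breast cancer.",
    "BRCA1 is also associated with ovarian cancer.",
    "TP53 variants have been reported in breast cancer.",
    "The study examined BRCA1 carriers with a family history of breast cancer.",
]
pairs = count_cooccurrences(sentences)
print(pairs.most_common())
```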

18. **Clinical Natural Language Processing (cNLP)**: Clinical Natural Language Processing is a specialized branch of NLP that focuses on analyzing clinical text data, such as patient records, physician notes, and medical reports. cNLP aims to extract clinical information, support clinical decision-making, and improve healthcare outcomes through the use of NLP techniques tailored to the healthcare domain.
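Rule-based pattern matching remains a common baseline for extracting structured facts from clinical notes. The sketch below uses a hypothetical, deliberately simplified rule (a single-word drug name followed by a numeric dose and a unit); it would miss multi-word drug names, dose ranges, and many other real-world formats.

```python
import re

# Hypothetical rule: a single-word drug name, a numeric dose, then a unit.
DOSE_PATTERN = re.compile(
    r"\b(?P<drug>[A-Za-z]+)\s+(?P<dose>\d+(?:\.\d+)?)\s*(?P<unit>mg|mcg|g|units)\b"
)

def extract_doses(note: str) -> list[dict]:
    """Return drug/dose/unit mentions matched by the pattern."""
    return [m.groupdict() for m in DOSE_PATTERN.finditer(note)]

note = "Started metformin 500 mg twice daily; continue lisinopril 10 mg."
print(extract_doses(note))
# [{'drug': 'metformin', 'dose': '500', 'unit': 'mg'}, {'drug': 'lisinopril', 'dose': '10', 'unit': 'mg'}]
```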

19. **Challenges in Biomedical NLP**: Biomedical NLP poses several challenges due to the complexity and domain-specific nature of biomedical text. Some of the key challenges include dealing with specialized terminology, handling abbreviations and acronyms, addressing ambiguity in medical language, and ensuring the privacy and security of sensitive healthcare data. Overcoming these challenges requires domain expertise, robust algorithms, and careful data preprocessing.
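To illustrate the abbreviation challenge, the sketch below expands abbreviations from a hypothetical dictionary and, rather than guessing, flags abbreviations with more than one expansion so they can be sent to a context-based disambiguation step. The dictionary contents and function name are assumptions for illustration.

```python
# Hypothetical abbreviation dictionary; "MS" is deliberately ambiguous.
ABBREVIATIONS = {
    "htn": ["hypertension"],
    "sob": ["shortness of breath"],
    "ms": ["multiple sclerosis", "mitral stenosis", "morphine sulfate"],
}

def expand_abbreviations(tokens):
    """Expand unambiguous abbreviations and flag ambiguous ones for review."""
    expanded = []
    for token in tokens:
        senses = ABBREVIATIONS.get(token.lower())
        if senses is None:
            expanded.append(token)                  # not a known abbreviation
        elif len(senses) == 1:
            expanded.append(senses[0])              # safe one-to-one expansion
        else:
            expanded.append(f"{token}[AMBIGUOUS: {' | '.join(senses)}]")
    return expanded

print(expand_abbreviations("Pt with HTN and SOB".split()))
# ['Pt', 'with', 'hypertension', 'and', 'shortness of breath']
print(expand_abbreviations("history of MS".split())[-1])
# MS[AMBIGUOUS: multiple sclerosis | mitral stenosis | morphine sulfate]
```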

20. **Applications of Biomedical NLP**: Biomedical NLP has a wide range of applications across various domains in healthcare and life sciences. Some common applications include information extraction from biomedical literature, clinical decision support systems, pharmacovigilance for drug safety monitoring, patient phenotyping for precision medicine, and biomedical knowledge discovery through text mining. The use of NLP in biomedical research continues to grow, offering new opportunities for advancing medical science and improving patient care.

In conclusion, understanding the key terms and vocabulary associated with Natural Language Processing in Biomedical Research is essential for anyone working in the field of AI applications in biotechnology. By familiarizing oneself with these concepts and techniques, researchers and practitioners can harness the power of NLP to extract valuable insights from textual data, accelerate scientific discovery, and enhance healthcare outcomes.

Key takeaways

- Natural Language Processing (NLP) in Biomedical Research involves the application of computational techniques to analyze, understand, and generate human language in the context of biomedical data and information.

- **Tokenization**: Tokenization is the process of breaking down text into smaller units called tokens, which could be words, phrases, or symbols.

- **Lemmatization**: Lemmatization is the process of reducing words to their base or root form (lemma).

- **Stemming**: Stemming is another text normalization technique that involves removing suffixes from words to reduce them to their root form (stem).

- **Named Entity Recognition (NER)**: Named Entity Recognition is a process in NLP that involves identifying and classifying named entities in text into predefined categories such as person names, locations, organizations, and more.

- **Part-of-Speech (POS) Tagging**: POS tagging helps in understanding the syntactic structure of text and is essential for many NLP tasks, including information extraction, sentiment analysis, and machine translation.

- **Stop Words**: Stop words are common words (e.g., "the," "is," "and") that are often filtered out during text preprocessing because they do not carry significant meaning in the analysis.